Published: 2026-03-28 · 22 min read

How to Choose the Right AI Model for Your Video Project

2026-ready guide: align ads, portraits, cinematic B-roll, and long-form stories with the right AI video model—compare outputs and effects in one Vivify AI workflow.

Choosing an AI video model in 2026 is less about chasing a leaderboard and more about matching model strengths to what you ship. A hero product shot, a talking-head creator clip, and a sweeping aerial mood piece stress different capabilities: texture fidelity, temporal consistency, motion realism, and maximum duration. A single generation pass sometimes cannot deliver all of them at once.

Start from the deliverable, not the hype cycle

- Ads and promos: prioritize first-frame clarity, punchy motion, and legible branding beats. Watch time is won when viewers instantly understand what they are looking at.

- Portraits and creators: prioritize identity stability and skin texture; micro-flicker across frames erodes trust faster than a slightly soft focus.

- Cinematic B-roll: prioritize lighting coherence and optically plausible parallax; viewers who have already seen plenty of AI clips spot “impossible” light instantly.

- Longer narratives: prioritize duration headroom and continuity; a gorgeous two-second clip that cannot extend is a dead end for serialized content.

Map models to goals (and measure honestly)

High-fidelity models excel at dense scenes and fine detail; faster models help you explore hooks before you burn credits on a polish pass. Keep a lightweight scorecard: subject consistency, motion quality, artifact rate, and time-to-preview. A reliable way to fill it in is to run parallel A/B tests across two model families with the same brief (same subject, same lighting vocabulary, different motion engines), then standardize effects in post.
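As a rough illustration, the scorecard can live in a few lines of Python. This is a minimal sketch, not a Vivify AI feature: the `VariantScore` fields, the 0-to-1 rating scale, the weights, and the 120-second preview ceiling are all assumptions to tune for your own deliverable, and the ratings come from your own review of each clip.

```python
from dataclasses import dataclass


@dataclass
class VariantScore:
    model: str                  # hypothetical label, e.g. "model_a"
    subject_consistency: float  # 0.0-1.0, higher is better
    motion_quality: float       # 0.0-1.0, higher is better
    artifact_rate: float        # 0.0-1.0 share of flawed frames, lower is better
    time_to_preview_s: float    # seconds until a reviewable preview


# Assumed weights; adjust them to match what the deliverable needs most.
WEIGHTS = {"subject": 0.35, "motion": 0.30, "artifacts": 0.25, "speed": 0.10}
MAX_PREVIEW_S = 120.0  # assumed ceiling: slower previews earn no speed credit


def overall(score: VariantScore) -> float:
    """Combine the four scorecard criteria into one weighted number."""
    speed = max(0.0, 1.0 - score.time_to_preview_s / MAX_PREVIEW_S)
    return (
        WEIGHTS["subject"] * score.subject_consistency
        + WEIGHTS["motion"] * score.motion_quality
        + WEIGHTS["artifacts"] * (1.0 - score.artifact_rate)
        + WEIGHTS["speed"] * speed
    )


def pick_winner(scores: list[VariantScore]) -> VariantScore:
    """Return the variant with the highest weighted score."""
    return max(scores, key=overall)


# Two variants generated from the same brief, rated by hand.
scores = [
    VariantScore("model_a", 0.9, 0.8, 0.1, 90.0),
    VariantScore("model_b", 0.7, 0.7, 0.3, 20.0),
]
print(pick_winner(scores).model)
```

With these weights a slower but steadier variant can still win; raising the `speed` weight tilts the scorecard toward rapid hook testing.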

Budget, credits, and iteration cadence

Ask before you lock a model: How many variants can we afford this week? If your pipeline needs ten rapid hook tests for TikTok or Reels, bias toward models that return readable previews quickly and fail gracefully. If you need one centerpiece for a paid media flight, invest in the highest-fidelity tier you can afford, and plan color correction and grain matching afterward.
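The "how many variants can we afford" question is simple arithmetic. The sketch below assumes a weekly credit allowance and flat per-render costs; the function name and every number are hypothetical and do not reflect actual pricing for any model or for Vivify AI.

```python
def draft_budget(weekly_credits: int, draft_cost: int,
                 polish_cost: int, polish_passes: int = 1) -> int:
    """Return how many fast drafts fit after reserving credits for polish."""
    if draft_cost <= 0 or polish_cost < 0 or polish_passes < 0:
        raise ValueError("costs and pass counts must be non-negative, draft_cost > 0")
    reserved = polish_cost * polish_passes   # set aside for high-fidelity renders
    remaining = weekly_credits - reserved    # what is left for exploration
    return max(0, remaining // draft_cost)   # whole drafts only, never negative


# Hypothetical week: 500 credits, 20 per draft, one 150-credit polish pass.
print(draft_budget(500, 20, 150))
```

If the result falls below the number of hook tests you need, that is a signal to pick a cheaper draft model or drop a polish pass before the week starts.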

Vivify AI advantage

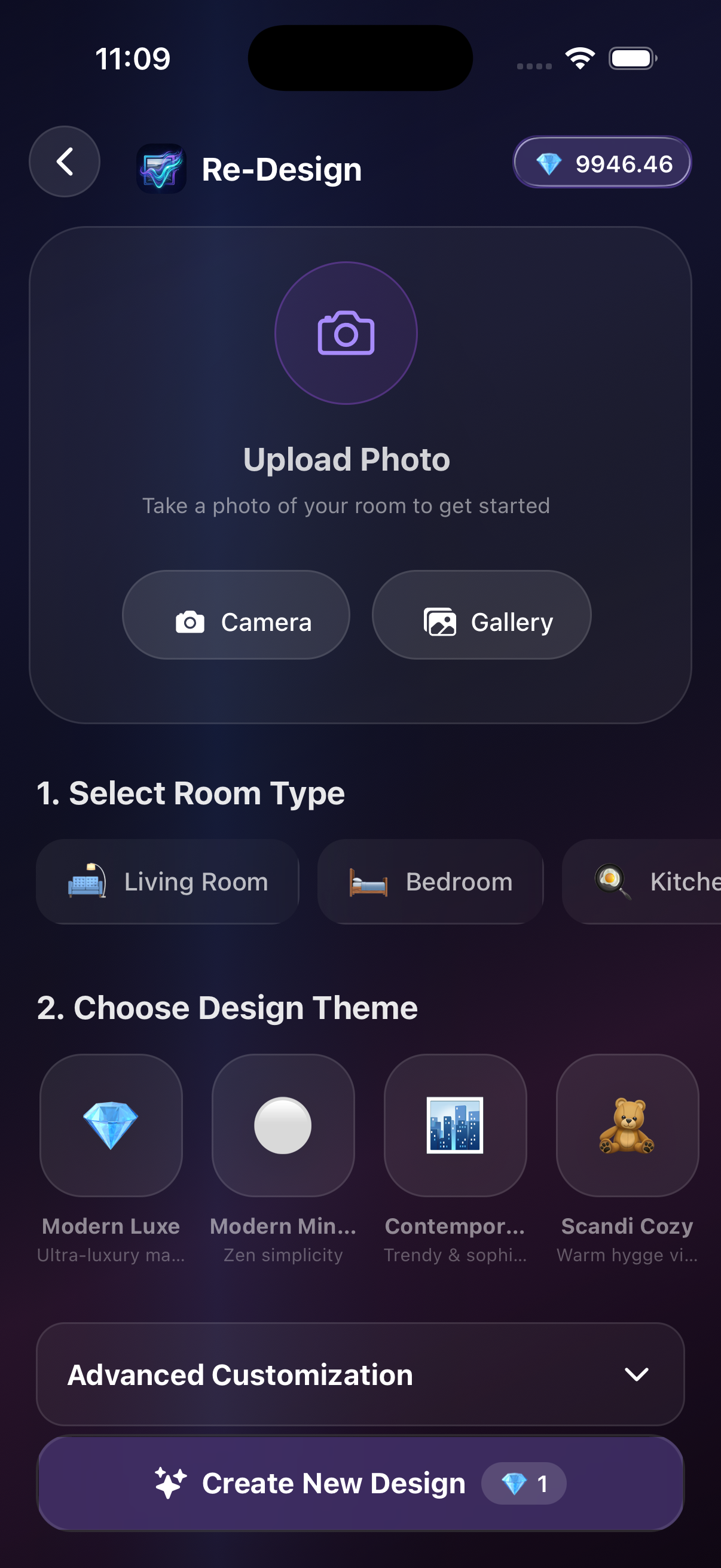

Vivify AI brings multiple premium models into one app so you can generate comparable variants, pick a winner, and apply a consistent effects pass—without juggling separate tools or browser tabs on mobile. That is the difference between “experimenting with AI” and running a repeatable content system.

Inside Vivify

Compare engines on one screen—pick a winner, then align effects before you spend credits on long renders.

Practical workflow

- Write a neutral brief (subject, environment, motion, lighting).

- Generate two variants—either different models or the same model with adjusted motion emphasis.

- Pick the winner, then apply one unified polish (effects, upscale, aspect ratio).
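The brief from the first step can be kept as structured data so that both variants differ in exactly one field. This is a minimal sketch under assumptions: the `Brief` class, its comma-joined prompt format, and the sample wording are illustrative only, and no particular model's prompt syntax is implied.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Brief:
    subject: str
    environment: str
    motion: str
    lighting: str

    def to_prompt(self) -> str:
        """Join the four fields in a fixed order so variants stay comparable."""
        return f"{self.subject}, {self.environment}, {self.motion}, {self.lighting}"


base = Brief(
    subject="ceramic mug on a wooden table",
    environment="small kitchen, morning",
    motion="slow push-in",
    lighting="soft window light",
)

# Second variant: same subject, environment, and lighting; only motion changes.
variant_b = replace(base, motion="slow orbit to the left")

for brief in (base, variant_b):
    print(brief.to_prompt())
```

Changing one field at a time makes it easier to attribute a quality difference to the motion emphasis rather than to an accidental change in wording.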

FAQ

Should I always use the newest model?

Not necessarily—match model strengths to your scene type and review recent outputs for your niche.

How do I avoid inconsistent characters across clips?

Keep prompts aligned on wardrobe, lighting, and camera distance; regenerate short segments rather than over-stuffing one prompt.

Takeaways

- Select models by deliverable and measurable quality, not launch buzz.

- Compare two variants before expensive post work.

- Use Vivify AI to keep comparison and effects in one fast mobile workflow.